The surreal fact in the human tragedy in Dallas is that the sniper who murdered five police officers was killed not by another officer but by a robot. This conjures science fiction images of killer robots deployed against humans. It's not altogether reassuring.

Technically, of course, the actual killer was not the robot but the officer operating it. The human element was very much in play: a person detonated the explosive the robot carried. Sci-fi buffs might draw disturbing analogies to movies such as "Robocop," the popular 1987 film set in a near-future dystopian world in which a police officer brutally murdered by a gang of thugs is refitted as a "cyborg" -- a cybernetic creature part human and part mechanical -- to use his powers to protect civilization.

Robots in real life have been used in many dangerous situations to detect and defuse bombs or to detonate pipe bombs in suspicious places. And the common, but deadly, C4 plastic explosive attached to the Dallas robot has been widely used in military combat.

Though the use of this robot to kill in a police operation raises serious moral issues, machines have been employed to kill in the past. The tank gave the first soldier to use it an enormous advantage over the soldier who didn't have one. The repeating rifle and the machine gun afforded enormous mechanical advantages as well.

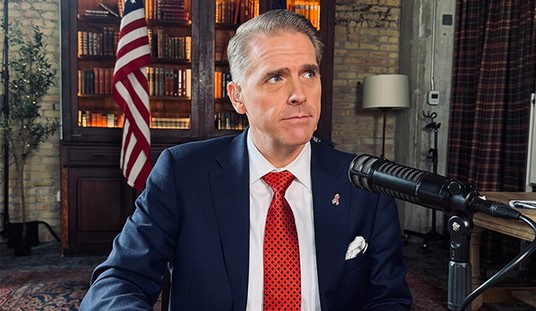

"I approved it. And I'll do it again if presented with the same circumstances," Dallas Police Chief David Brown tells CNN News in defense of using the new technology to kill Micah Xavier Johnson, the 25-year-old Army veteran of Afghanistan with whom he negotiated in hopes of persuading him to give himself up before he killed more policemen. "He just basically lied to us," the chief says, "playing games, laughing at us, singing, asking how many (cops) did he get, and that he wanted to kill some more, and there were bombs there. So there was no progress on the negotiation."


Nor was it clear whether the killer would decide quickly to charge the thin blue line, as he was threatening to do. He might have been bluffing, but in the moment the chief could not know that.

Without faulting the decision of the Dallas police, Mike Masnick, editor of Techdirt, a blog that analyzes high-tech issues as they relate to business and government policies, suggests thinking of different ways to use robots in dangerous situations. "If we're going to enter the age of robocop, shouldn't we be looking for ways to use such robotic devices in a manner that would help capture suspects alive, rather than dead?"

Others have asked whether a less lethal alternative, perhaps riot gas, could have been used to subdue Micah Johnson. Chief Brown doesn't want to hear criticism of the use of the robot in Dallas from armchair observers. "You have to be on the ground," he says.

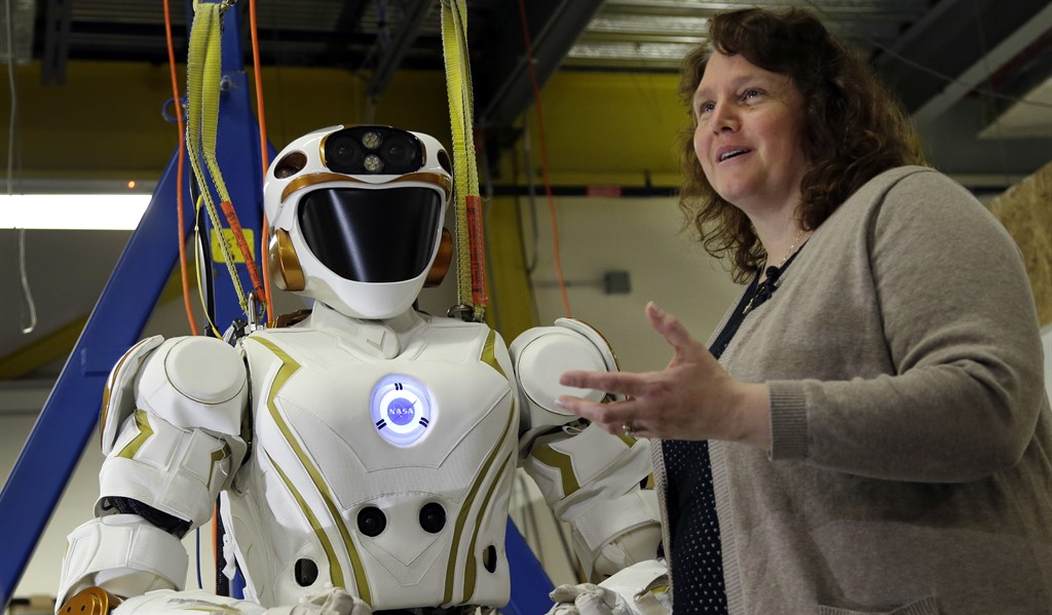

Chief Brown gets no argument here. The robot probably saved the lives of other cops. It was no R2-D2, the endearing droid in "Star Wars" that rescued humans in peril with a certain mechanical heroism. The robot that blew up the cop killer was an ungainly contraption, with a claw-and-arm extension ready to destroy a man determined to kill more police officers.

So the lionhearted Achilles of classical times has been replaced by a Northrop Grumman Remotec Andros F-5, and our moral vocabulary, along with frightened sensibilities, requires an update. We need to think not only about how to disarm the assassin in our midst, but how to talk about what is happening. The polarizing arguments and the quick rush to simplistic judgment never serve us well.

Thoughtful deliberation and understanding are scarce and unappreciated in a media environment where round-the-clock visuals capture real-life violence in real time. When social media captures violent images without context, it supplies only a partial picture. Videos of the deaths of black men in Minnesota and Louisiana shocked the nation, and they triggered real-life protest against the white policemen who shot them. The rush to judgment was rash and prejudicial. It set off uncontrolled violence and the inevitable cries of racism.

Roland Fryer, an economics professor at Harvard, studied a thousand police shootings by 10 major police departments, and found no racial bias in the shootings. The professor, who is black, called the counterintuitive finding "the most surprising result in my career." It's hardly the last word, but it does say a lot for thoughtful deliberation and careful understanding. Robots can't do that. Neither can humans indulging in thinking like robots.
